
#loss_value is the loss between the predicted value and the actual value #target is the actual value in our dataset #predicted_value is the prediction from our neural network The function returned from the code above can be used to calculate how far a prediction is from the actual value using the format below.

Let’s look at how to add a Mean Square Error loss function in PyTorch. This makes adding a loss function into your project as easy as just adding a single line of code. All PyTorch’s loss functions are packaged in the nn module, PyTorch’s base class for all neural networks. PyTorch comes out of the box with a lot of canonical loss functions with simplistic design patterns that allow developers to easily iterate over these different loss functions very quickly during training.

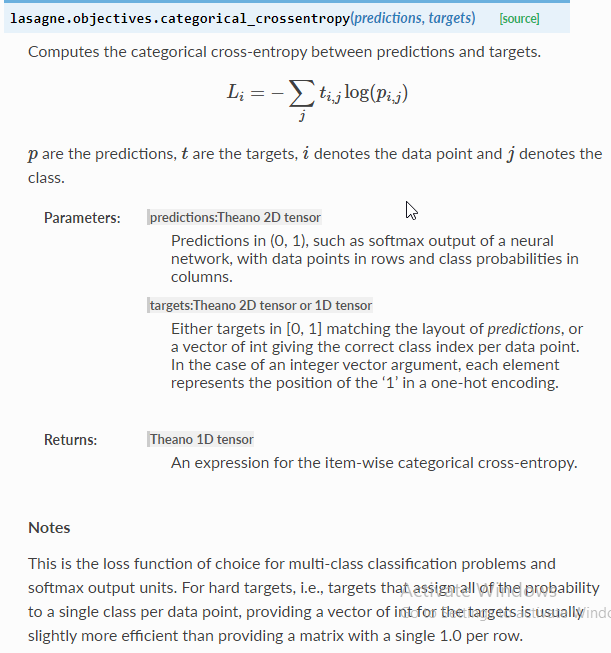

We are going to uncover some of PyTorch's most used loss functions later, but before that, let us take a look at how we use loss functions in the world of PyTorch. Sometimes, the mathematical expressions of loss functions can be a bit daunting, and this has led to some developers treating them as black boxes. By doing so, we increase the probability of our model making correct predictions, something which probably would not have been possible without a loss function.ĭifferent loss functions suit different problems, each carefully crafted by researchers to ensure stable gradient flow during training. The importance of loss functions is mostly realized during training, where we nudge the weights of our model in the direction that minimizes the loss. When our model is making predictions that are very close to the actual values on both our training and testing dataset, it means we have a quite robust model.Īlthough loss functions give us critical information about the performance of our model, that is not the primary function of loss function, as there are more robust techniques to assess our models such as accuracy and F-scores. Technically, how it does this is by measuring how close a predicted value is close to the actual value. We stated earlier that loss functions tell us how well a model does on a particular dataset. Now that we have a high-level understanding of what loss functions are, let’s explore some more technical details about how loss functions work. We will further take a deep dive into how PyTorch exposes these loss functions to users as part of its nn module API by building a custom one. In this article, we are going to explore these different loss functions which are part of the PyTorch nn module.

Speaking of types of loss functions, there are several of these loss functions which have been developed over the years, each suited to be used for a particular training task.

Knowing how well a model is doing on a particular dataset gives the developer insights into making a lot of decisions during training such as using a new, more powerful model or even changing the loss function itself to a different type. In layman terms, a loss function is a mathematical function or expression used to measure how well a model is doing on some dataset. Loss functions are fundamental in ML model training, and, in most machine learning projects, there is no way to drive your model into making correct predictions without a loss function.
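Here is a minimal sketch of the Mean Squared Error usage described above, assuming a recent PyTorch install. The names predicted_value, target and loss_value follow the text; mse_loss_fn, the toy tensors, and the tiny nn.Linear model used for the training step are hypothetical illustrations, not the author's code. The first half checks nn.MSELoss against the hand-computed mean of squared differences; the second half runs one optimizer step to show the loss being used to nudge the weights in the direction that lowers it.

```python
import torch
import torch.nn as nn

# Build the Mean Squared Error loss; by default (reduction="mean") it
# averages the squared differences over all elements.
mse_loss_fn = nn.MSELoss()  # hypothetical name for the loss object

# Hypothetical toy values standing in for model output and dataset labels.
predicted_value = torch.tensor([2.5, 0.0, 2.0, 8.0])  # prediction from the network
target = torch.tensor([3.0, -0.5, 2.0, 7.0])          # actual value in the dataset

# loss_value is the loss between the predicted value and the actual value.
loss_value = mse_loss_fn(predicted_value, target)

# The same number computed by hand: the mean of the squared differences.
manual_loss = ((predicted_value - target) ** 2).mean()
print(loss_value.item())  # 0.375

# One training step on a tiny hypothetical model: compute the loss,
# backpropagate, and let the optimizer nudge the weights downhill.
torch.manual_seed(0)
model = nn.Linear(1, 1)
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
x = torch.tensor([[1.0], [2.0], [3.0]])
y = torch.tensor([[2.0], [4.0], [6.0]])

loss_before = mse_loss_fn(model(x), y)
optimizer.zero_grad()   # clear any previously accumulated gradients
loss_before.backward()  # gradients of the loss w.r.t. the weights
optimizer.step()        # move the weights against the gradient

# Re-evaluate the loss with the updated weights (no gradients needed).
with torch.no_grad():
    loss_after = mse_loss_fn(model(x), y)

print(loss_before.item(), loss_after.item())  # the second value is smaller
```

Swapping in a different criterion, for example nn.L1Loss(), is a one-line change to the constructor; the rest of the training step stays the same.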
